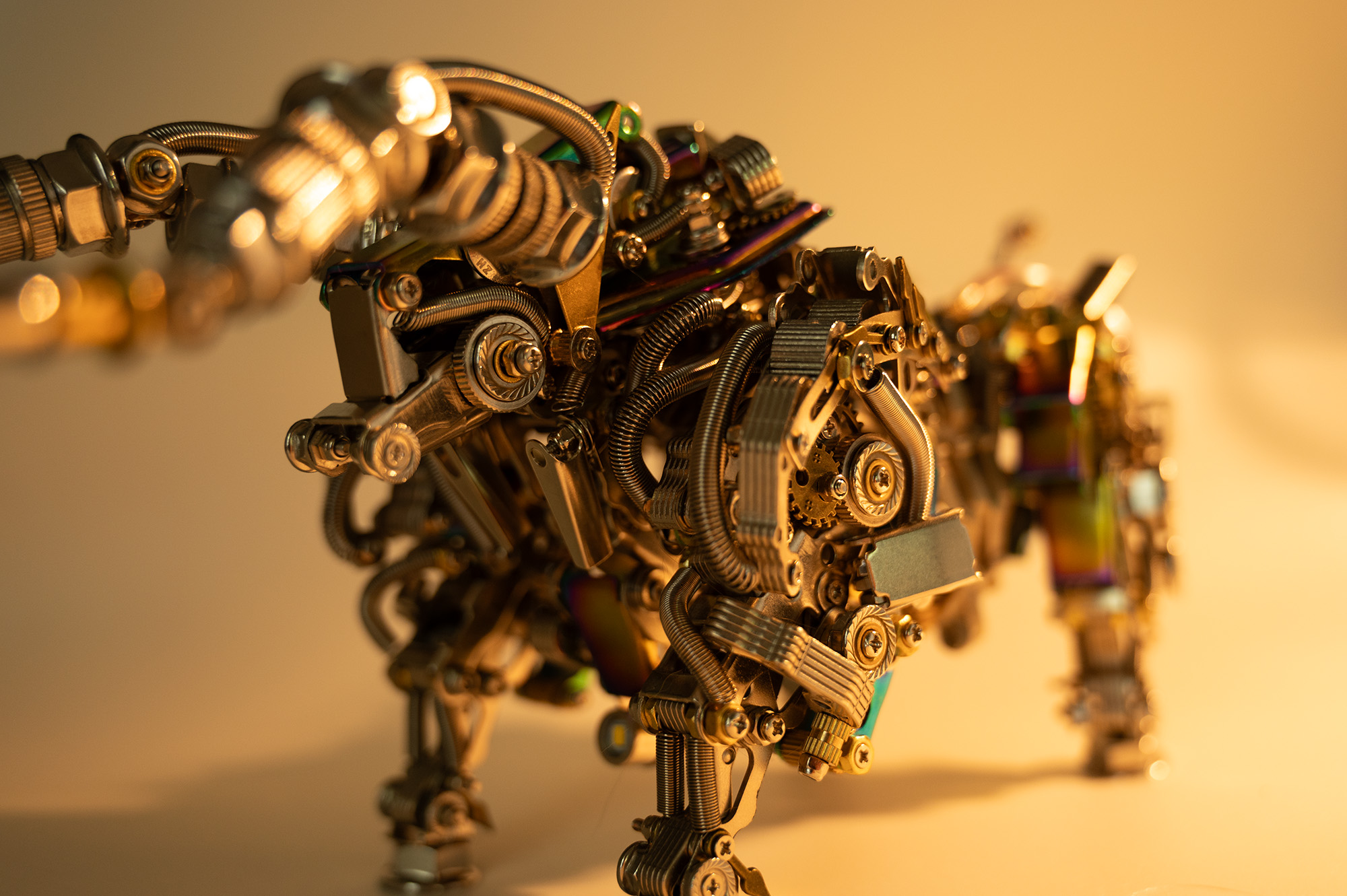

Making a metal bull model almost killed me.

It is a known element of ADHD fixation that the sufferer tends to Do The Next Thing without regard for the context, so it was a full minute before I realized the hand trying to force a screw two more millimeters through an unyielding socket was the same hand holding a screwdriver pointed at my eye, three inches above it.

It didn’t look too difficult in the advertisement. I assume that unlike me, whoever built the floor model was supplied with all the correct pieces, and those pieces matched the illustrations in the manual. I also have to assume whatever manual they used was written in its author’s native tongue. The meaning of “Do not be afraid of accessories will be bad.” can be sussed with a pictorial aid; “Can the thumb.” remains a mystery. Some instructions aren’t translated at all, putting my iPhone camera’s close focus to the test as I try to get Google Translate to read 2pt Mandarin. I’m not convinced any human had a role in creating this. More likely it’s the rogue output of a forgotten automated factory whose last inhabitant forgot to turn off the wifi.

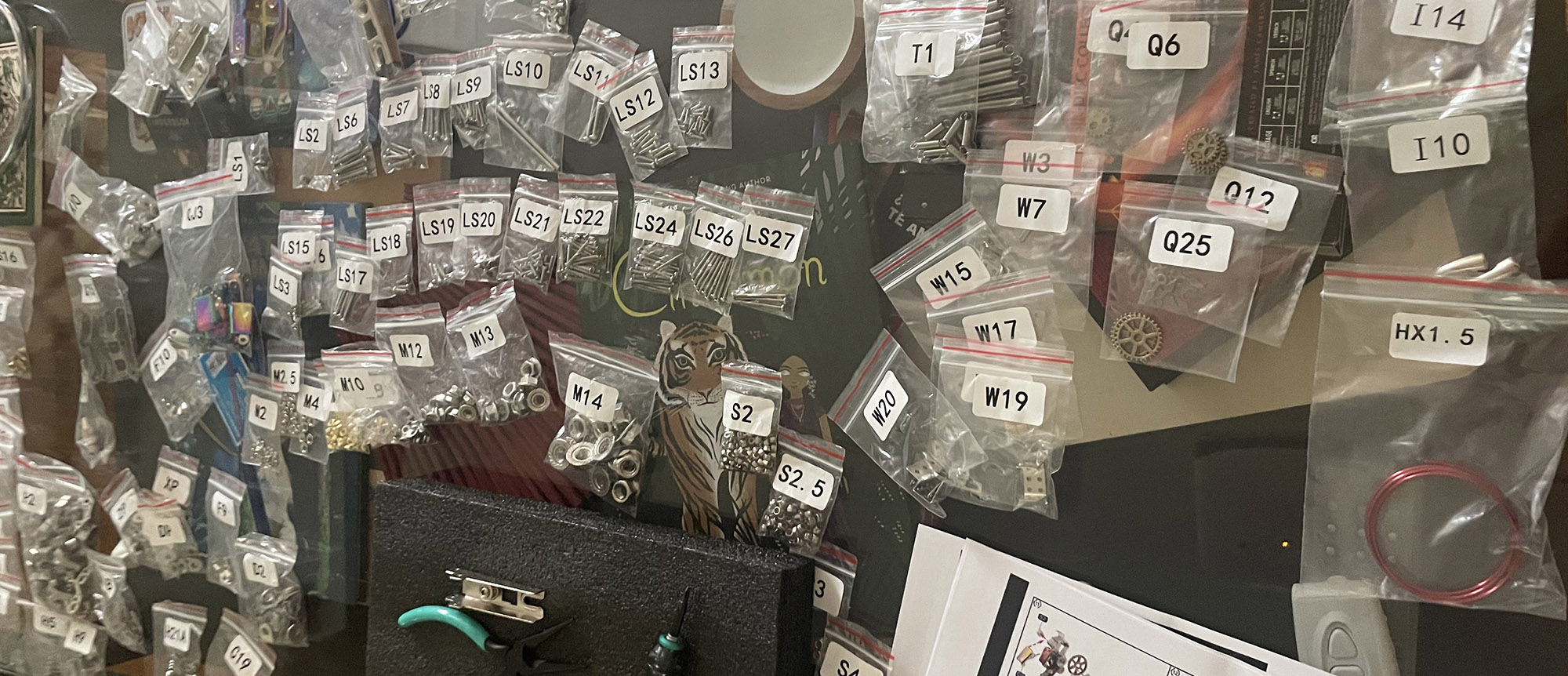

“Make sure you have all the correct pieces,” my ass.

I cut my modelling teeth on Lego. Orderly, simple, instructions broken down with children in mind. The most painful thing you can do to yourself during Lego assembly is accidentally press two four-pip flats together. Working on this scrap metal bestiary has drawn blood twice. Each set comes with perfectly good tools, which I toss in a drawer in favor of the ludicrously high-end tools I’ve gathered over the years, because those at least give me even odds of getting through a step on the third try.

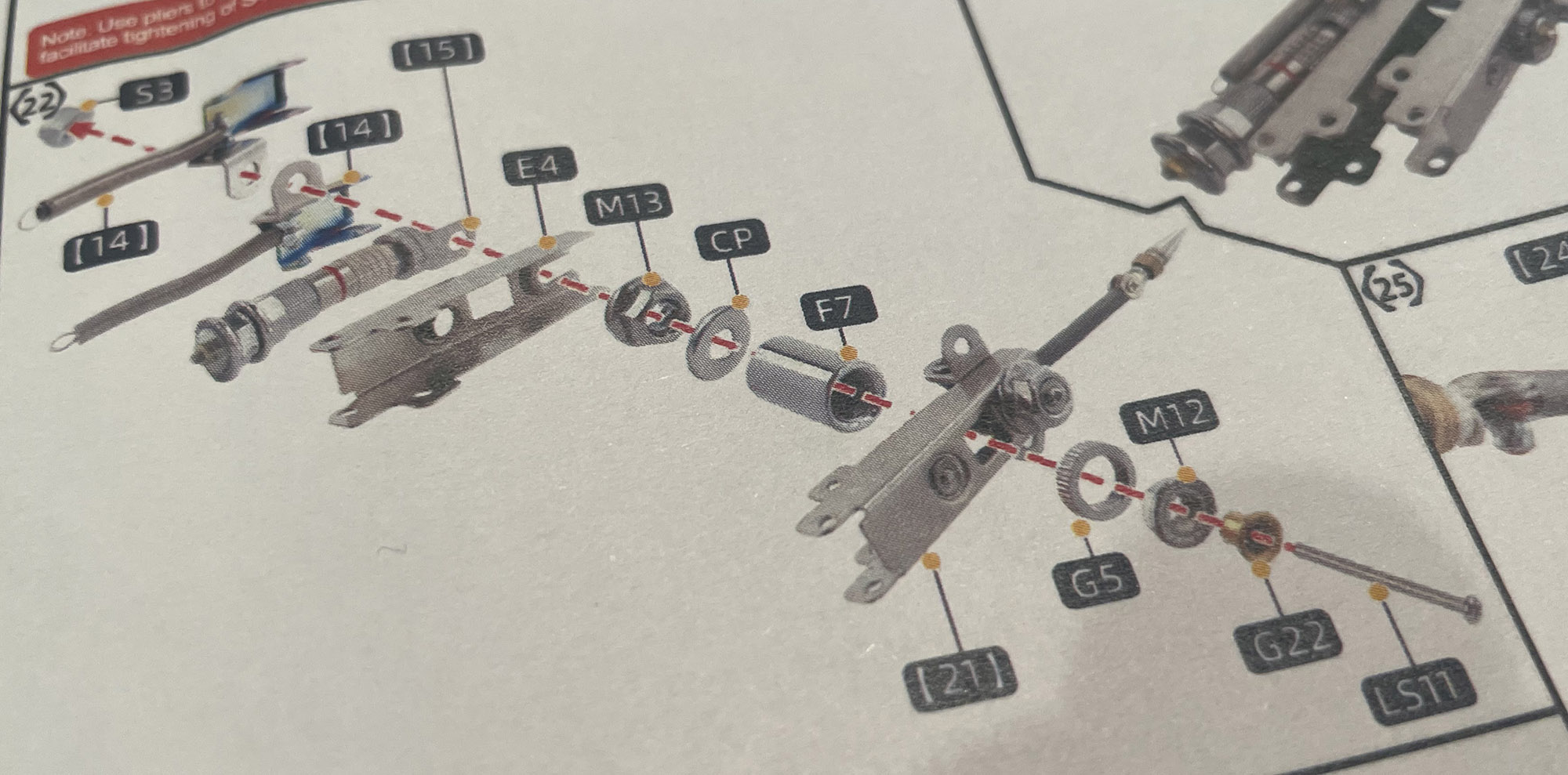

On the day I lose all hope, I will go over each set of instructions to see if they really had to be as arduous as they are. Perhaps they could have mentioned you need to keep these two pieces angled for a step three pages away. Or that you have to force the headplate over the bolt-and-nut pair of the piece below it to render the process logically tenable. Some steps take me half an hour, as I try to find inventive ways of holding fifteen parts together after threading a screw in and out of so many prongs and washers it feels like weaving.

You try holding this nonsense together with one hand.

Everything I’ve learned about engineering appears to have been wrong, as I bend and twist and force things together and into position, stripping screws in the hopes they’ll stay put. Be it poor illustration, poor comprehension, or physical law, some parts will not go where they’re supposed to go, and I have to shrug and hope there’s enough right to keep it standing in the end.

Some of the pieces are so small I’ve lost them to quantum tunneling. The huge hands that so often feel like another gift from the genetic lottery are active liabilities in this project: They won’t fit where they need to be. I have to pick up nuts and washers with tweezers and needle nose pliers and hope I don’t drop them somewhere else my fingers won’t fit.

That’s not even the only reason I’m congenitally ill-equipped for this project. My family starts shaking as we get older. Doctors call it “idiopathic tremor,” which is doctor-speak for “sucks to be you” when they don’t have anything useful to say. It’s accelerated over the last few years, to the point where I’m tempted to record the following phrases:

“No it’s not Parkinson’s.”

“No it’s not alcohol.”

“Yes, I drink too much but not that much.”

“No I haven’t tried a beta blocker, I might.”

“No I haven’t tried CBD, and I won’t.”

“Your MOM needs an essential oil.”

If it’s a bad spell, I can’t do certain things, and there’s no indication when or if I’ll be able to do them again. I ask my wife for help, and if she can’t help, I have to give up. I don’t mind asking people for help, but manual dexterity was my THING. It’s half of why I’m Good At The Things. Now it’s bleeding away in the middle of my life.

All of which contribute to the next reason this all seems like a terrible idea: My anger issues leak out in the small frustrations. Death of a loved one? I’ve got tools for that. Stranded 600 miles from home? Been there, worked it out. Roommate left the dishtowel on the counter? Scream myself hoarse, complain to everyone I know for the next ten years. Website takes more than thirty seconds to load? Slam laptop shut, taxes are for suckers anyway.

The process of building this model was composed of nothing but tiny, meaningless, unnecessary frustrations. So it might come as a surprise that this was the second of these that I’ve done.

I ordered two at once, and was prepared to consign the second to the ever-filling waste bin of projects after suffering through the first. I’m not one for hard work; even my obsessions and self-flagellation are humbled before a bag of Cheetos and a Marvel movie. There are TV shows I deem too taxing to watch after a long day. These models are all but designed to undo me personally, and I had to do at least one more, because they are training.

If my hands don’t work properly, I have to find workarounds. I have to clamp things in certain ways. I found that if I could hold a nut with the pliers in a particularly difficult position and use a powered screwdriver on the screw, I could eventually get the thread to catch despite my shaking. I found new ways to sit and breathe to minimize the shaking. All the medications I know of to deal with my sucks-to-be-you tremors conflict with other medications I may someday have to take, so at least I’ll have some practice at managing things I’ll have to do when my body isn’t cooperating.

Most important, this is a master class gauntlet of hellfire in managing frustration. That’s all it is. Hours and hours and hours of pointless torture. At the tender age of 43, I’ve slammed my fist on a glass desk because a video game got too hard, and that’s barely acceptable behavior for a five-year-old. I’m in and out of therapy for the underlying causes of that behavior, but nothing has been so effective at curbing it as spending twenty hours intimately engaged with the purest form of the things that set me off: the unexpected failures that unearth the feeling of all the real failures buried by explanations.

I probably won’t do another one of these models, because there’s a real risk of injury. But they did make me contend with things I have to face and things I should face. 5 out of 5; would not buy again.

Shockingly, even Million Ants the Comically Destructive Cat gave me a break. I think he decided I was suffering enough.